How many bits would you need to count to 1000?

Using the formula bits = floor(log2(n)) + 1, you'll see that the smallest four-digit number, 1000, requires 10 bits, and the largest four-digit number, 9999, requires 14 bits. The number of bits varies between those extremes. For example, 1344 requires 11 bits, 2527 requires 12 bits, and 5019 requires 13 bits. Why does this occur? Each time a value reaches the next power of 2 (1024, 2048, 4096, 8192), it needs one more bit.
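The figures above can be checked with a short sketch. It relies on Python's built-in `int.bit_length()`; the helper name `bits_needed` is only an illustration and does not come from the original text.

```python
def bits_needed(n: int) -> int:
    """Return how many binary digits are needed to write the non-negative integer n."""
    # int.bit_length() returns 0 for 0, but writing "0" still takes one digit.
    return max(1, n.bit_length())


for value in (1000, 1344, 2527, 5019, 9999):
    print(value, bits_needed(value))
```

The bit count steps up by one whenever the value passes a power of 2, which is why four-digit decimal numbers span 10 to 14 bits.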

How many bits in binary would you need to count to 1000?

1000 in binary is 1111101000. Unlike the decimal number system, where we use the digits 0 to 9 to represent a number, the binary system uses only two digits, 0 and 1 (bits). It takes 10 bits to represent 1000 in binary.
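One common way to do the conversion by hand is repeated division by 2, collecting the remainders. The sketch below assumes a non-negative integer input; the function name `to_binary` is hypothetical.

```python
def to_binary(n: int) -> str:
    """Convert a non-negative integer to a binary string by repeated division by 2."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        digits.append(str(n % 2))  # remainder is the next bit, least significant first
        n //= 2
    # Remainders were collected from least to most significant, so reverse them.
    return "".join(reversed(digits))


print(to_binary(1000))       # 1111101000
print(len(to_binary(1000)))  # 10
```

Python's built-in `bin(1000)` gives the same digits with a `0b` prefix, so the hand-rolled version is mainly useful for seeing the steps.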

How many bits do you need to count to 500?

If you need to represent 500 discrete values, you need 9 bits.

How do you figure out how many bits you need?

So, if you have 3 digits in decimal (base 10) you have 10^3 = 1000 possibilities. Then you have to find a number of digits in binary (bits, base 2) so that the number of possibilities is at least 1000, which in this case is 2^10 = 1024 (9 digits isn't enough because 2^9 = 512, which is less than 1000).
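The search described above, trying more bits until the number of possibilities is large enough, can be sketched as a small loop. The name `bits_for_values` is an assumption made for this example.

```python
def bits_for_values(count: int) -> int:
    """Smallest number of bits b such that 2**b >= count (count must be >= 1)."""
    if count < 1:
        raise ValueError("count must be at least 1")
    bits = 0
    while 2 ** bits < count:  # not enough combinations yet
        bits += 1
    return bits


print(bits_for_values(1000))  # 10, because 2**10 = 1024
print(bits_for_values(512))   # 9, because 2**9 = 512 exactly
print(bits_for_values(500))   # 9
```

Note the difference between representing a number of distinct values and writing a number itself: 512 distinct values (0 to 511) fit in 9 bits, while the number 512 written in binary (1000000000) takes 10.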

How high could you count with 10 bits?

Using 10 bits we can represent 1024 numbers, 0 through 1023, so the highest you can count is 1023.

What is the binary of 10000?

10000 in binary is 10011100010000.

How do you write 2000 in binary?

2000 in binary is 11111010000.

How many bits would be needed to count 21?

Answer. 5 bits are required for 19 and 21; 15 needs only 4 bits (1111). You use log base 2: the log base 2 of a decimal number, rounded down, gives the number of binary digits minus 1.
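Written out as a formula, and assuming n is a positive integer, the log rule reads as follows. The worked lines use 21 and 19 from the answer.

```latex
\[
  \text{bits}(n) = \lfloor \log_2 n \rfloor + 1
\]
\[
  \log_2 21 \approx 4.39 \;\Rightarrow\; \lfloor 4.39 \rfloor + 1 = 5,
  \qquad
  \log_2 19 \approx 4.25 \;\Rightarrow\; \lfloor 4.25 \rfloor + 1 = 5
\]
```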

How many total bits do you need to represent 512 numbers?

You need 9 bits to represent 512 numbers, twice as many as 8 bits can represent, since 2^9 = 512. ASCII is a character-encoding scheme that uses a numeric value to represent each character. For example, the uppercase letter "G" is represented by the decimal (base 10) value 71.

How many bits does one need to encode all 26 letters?

If you want to represent one character from the 26-letter Roman alphabet (A-Z), then you need log2(26) ≈ 4.7 bits of information. Since bits come in whole numbers, a fixed-width code needs 5 bits per letter.
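As an illustration, here is a minimal sketch of a fixed-width letter code. The mapping A=0 through Z=25 and the names `LETTERS`, `WIDTH`, and `encode` are assumptions for this example, not a standard encoding.

```python
import math
import string

LETTERS = string.ascii_uppercase            # "A" through "Z", 26 symbols
WIDTH = math.ceil(math.log2(len(LETTERS)))  # whole bits needed per letter


def encode(letter: str) -> str:
    """Return a fixed-width binary code for one uppercase letter (A=0 ... Z=25)."""
    return format(LETTERS.index(letter), f"0{WIDTH}b")


print(WIDTH)        # 5
print(encode("A"))  # 00000
print(encode("Z"))  # 11001
```

Five bits give 32 combinations, so 6 of them go unused in this scheme.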

How many bits would be needed to count all the students in class today?

It depends on the class size. If you have 12 students in class you will need 4 bits, since 2^3 = 8 is too few and 2^4 = 16 is enough.

How many bits would be needed to count 30 students?

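Counting 30 students takes 5 bits, since 2^4 = 16 is too few and 2^5 = 32 is enough. Storing a 30-character string is a different question: if you use 4 bytes per character you need at least 120 bytes, and possibly some bytes to indicate the length and other metadata. In Java, a 30-character String could take around 160 bytes.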

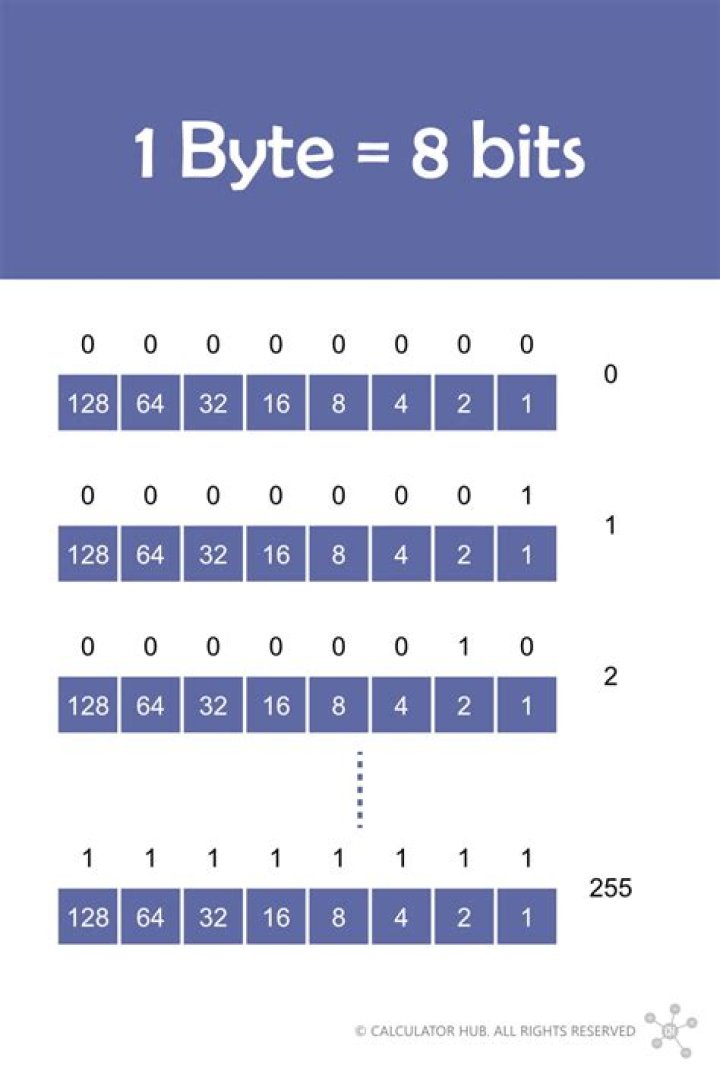

What is the value of a bit?

A bit has a single binary value, either 0 or 1. Although computers usually provide instructions that can test and manipulate bits, they generally are designed to store data and execute instructions in bit multiples called bytes. In most computer systems, there are eight bits in a byte.

How many bits are needed to represent 16?

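The number 16 itself is 10000 in binary, which takes 5 bits, while 16 distinct values (0 to 15) fit in 4 bits. The most common compact notation for binary is hexadecimal. In hexadecimal notation, 4 bits (a nibble) are represented by a single digit. Since 4 bits give 16 possible combinations and there are only 10 unique decimal digits, 0 to 9, hexadecimal adds the letters A to F for the values 10 to 15.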
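Hexadecimal covers all 16 nibble values by using the letters A to F for 10 to 15. The sketch below groups a binary string into nibbles and maps each to one hex digit; the function name `to_hex_nibbles` is hypothetical.

```python
def to_hex_nibbles(bits: str) -> str:
    """Convert a binary string to hexadecimal, one digit per group of 4 bits."""
    # Pad on the left with zeros so the length is a multiple of 4.
    padded = bits.zfill((len(bits) + 3) // 4 * 4)
    nibbles = [padded[i:i + 4] for i in range(0, len(padded), 4)]
    return "".join("0123456789ABCDEF"[int(nibble, 2)] for nibble in nibbles)


print(to_hex_nibbles("1111"))        # F
print(to_hex_nibbles("1111101000"))  # 3E8 (1000 in decimal)
```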

What is the minimum number of bits needed to represent 16 things?

You need 4 bits, because 4 bits give 2^4 = 16 possible combinations. For the same reason, 3 bits are needed to represent 8 possible numbers, and 2 bits are needed to represent 4 possible numbers.

How many bits does it take to store 256 in binary?

256 in binary is 100000000, which uses 9 bits. Note that 256 distinct values (0 to 255) fit in 8 bits.
